Recent Projects

Here I provide a list of my current research projects and publications:

Characterising galaxy clusters by their gravitational potential

Galaxy clusters are the largest known gravitationally bound objects in the Universe. As such, they occupy a special position within the hierarchy of cosmic structures. For instance, their abundance as a function of mass shows an exponential cut-off at these high mass scales. Since this abundance is determined by the initial conditions and the subsequent growth of structure, cluster number counts are highly sensitive to many of the parameters describing our Universe at large and provide crucial insights into them.

Moreover, inner cluster properties such as shape and inner profile are directly linked to the history of cosmic structure formation, as well as to the nature of dark matter, an elusive substance that fills the Universe in large amounts but whose composition is still unknown. Combining all the observables we can obtain from large cluster samples allows us to gain more insight into the nature of dark matter, and also of dark energy, a second enigmatic substance that is believed to accelerate the expansion of the Universe. Clusters have been recognised as one of the most promising observational probes for this purpose because they are sensitive to both the geometry of our cosmos and the growth of structure within it.

The cosmological use of clusters relies crucially on accurate methods to measure or map their mass (density). However, conventional approaches that characterise clusters in terms of their mass suffer from many problems. Strictly speaking, the mass of a cluster is not necessarily well defined: it is a concept that depends on the choice of overdensity radius and, in practice, is affected by various uncertainties related e.g. to equilibrium assumptions. A much better quantity for characterising both relaxed and unrelaxed clusters is the gravitational potential. In our research, we have shown that the gravitational potential can be used to characterise both the profile and the 3D shape of clusters straightforwardly and with fewer uncertainties.
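For reference, the dependence on overdensity radii can be made explicit. In the usual convention, the mass is defined as the mass enclosed within the radius r_Δ inside which the mean density equals Δ times the critical density ρ_c(z) of the Universe (some authors use the mean matter density instead), whereas the Newtonian potential Φ follows from the density field ρ through the Poisson equation. The notation below is the standard textbook one and is not taken from our papers:

```latex
M_\Delta = \frac{4\pi}{3}\,\Delta\,\rho_{\mathrm{c}}(z)\,r_\Delta^{3},
\qquad
\nabla^{2}\Phi = 4\pi G\,\rho
```

The value of Δ (e.g. 200 or 500) is a matter of convention, so the same cluster has a different mass under each choice, while the potential needs no such choice.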

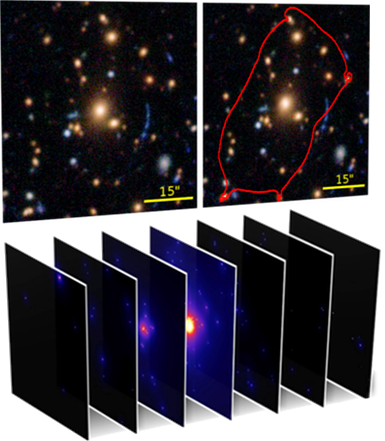

Automated detection of strong gravitational lenses

Gravitational lensing is the phenomenon of light being deflected and focused in the gravitational field of dense objects – such as galaxies or galaxy clusters. This can lead to strong distortions and even to multiple images of a light source behind the lens being visible on the sky. This not only produces spectacular shapes, but is also scientifically useful. By investigating how the light was deflected and distorted, we can infer how much mass must be present and how it must be distributed. Lensing thus offers a method to estimate the mass along the line of sight – e.g. the mass of a galaxy cluster that produces said lensing effect.

In practice, however, the difficulties start long before the modelling process: strong lenses are rare, and they need to be detected first. This essentially means finding a needle in a haystack within large survey datasets. It turns out that this task is less trivial than it sounds. Even in the age of machine-learning-based object recognition, this needle is hard to find. Essentially, this is because the haystack is crowded with lots of similar-looking objects. Lensed features usually have the shape of arcs, but this need not be the case. They are elongated, but can appear short if the instrument's point spread function is too wide. In various cases, lensed features cannot be securely identified as such even by experts. Their feature space simply overlaps with that of many non-lensed sources, which are in fact much more common in surveys.

This has motivated us to develop an entirely new automated lens detection technique that does not look for lensed features themselves, but filters survey regions for high concentrations of bright galaxies that are indicative of massive, dense structures such as galaxy clusters. Based on all the light in a given area of the sky, our lens detection code EasyCritics searches for such massive structures and models the lensing magnification as a function of source redshift to obtain the so-called critical curves, which make it possible to identify the most promising places to look for a lens. Our algorithm also predicts the amount and distribution of line-of-sight mass in a given region, allowing for a first characterisation of lens candidates. EasyCritics thus provides a list of lens candidates, with quantitative information on each, that can be followed up individually with high-resolution instruments to look for lenses in a more targeted way. In our recent work, we have shown that this allows for a more efficient and reliable detection of lenses, while at the same time increasing the completeness of detections, because EasyCritics is able to find lenses that were missed in earlier studies as their lensed features were too faint or unresolved. Importantly, EasyCritics not only speeds up the analysis by reducing the need for manual post-processing, but also removes many of the ambiguities that usually affect lens detection.
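For readers unfamiliar with the term, critical curves can be defined using the standard lensing formalism; this is textbook notation, not a description of how EasyCritics is implemented. With θ the observed image position, β the true source position and α the scaled deflection angle, the lens equation and the magnification μ read:

```latex
\boldsymbol{\beta} = \boldsymbol{\theta} - \boldsymbol{\alpha}(\boldsymbol{\theta}),
\qquad
\mathcal{A} = \frac{\partial\boldsymbol{\beta}}{\partial\boldsymbol{\theta}},
\qquad
\mu = \frac{1}{\det\mathcal{A}} = \frac{1}{(1-\kappa)^{2} - |\gamma|^{2}},
\qquad
\kappa = \frac{\Sigma}{\Sigma_{\mathrm{cr}}},
\qquad
\Sigma_{\mathrm{cr}} = \frac{c^{2}}{4\pi G}\,\frac{D_{\mathrm{s}}}{D_{\mathrm{d}}\,D_{\mathrm{ds}}}
```

Here κ is the convergence (the projected surface mass density Σ in units of the critical density Σ_cr), γ is the shear, and D_d, D_s and D_ds are the angular diameter distances to the lens, to the source, and between lens and source. Critical curves are the image-plane curves on which det A = 0, where the magnification formally diverges. The source redshift enters through D_s and D_ds, which is why the critical curves depend on it.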

Former, completed projects:

Image processing for astronomical surveys

As part of my Bachelor studies, I developed methods for the efficient analysis of large image datasets. This involved algorithms for image processing, object recognition and calculations on image data, on both CPU and GPU. Some of these routines have become a basis for the EasyCritics lens detection code, of which I am the main author. During the calibration of EasyCritics, gravitational lens models are fitted to known clusters, which requires a fast exploration of the lens model parameters. For this purpose, I developed an efficient, parallelised Markov chain Monte Carlo (MCMC) algorithm that exploits adaptive grid techniques to sample different realisations of the gravitational lensing potential and to find the optimal parameters of a light-traces-mass lens model in a fraction of the time required by conventional MCMC methods.
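The basic sampling idea behind such a fit can be illustrated with a minimal sketch. It uses a plain random-walk Metropolis sampler on a toy one-parameter Gaussian problem, not the parallelised adaptive-grid algorithm described above. All function names, the synthetic data and the parameter `true_value` are hypothetical and chosen only for illustration.

```python
import math
import random


def log_posterior(theta, data, sigma=1.0):
    """Gaussian log-likelihood with a flat prior (toy stand-in for a lens-model fit)."""
    return -0.5 * sum((d - theta) ** 2 for d in data) / sigma ** 2


def metropolis(log_prob, start, n_steps, step_size, rng):
    """Random-walk Metropolis sampler for a single scalar parameter."""
    chain = [start]
    current_lp = log_prob(start)
    for _ in range(n_steps):
        proposal = chain[-1] + rng.gauss(0.0, step_size)
        proposal_lp = log_prob(proposal)
        # Accept with probability min(1, p(proposal) / p(current)).
        # 1 - random() lies in (0, 1], so the logarithm is always defined.
        if math.log(1.0 - rng.random()) < proposal_lp - current_lp:
            chain.append(proposal)
            current_lp = proposal_lp
        else:
            chain.append(chain[-1])
    return chain


rng = random.Random(42)
true_value = 2.5  # hypothetical model parameter to recover
data = [true_value + rng.gauss(0.0, 1.0) for _ in range(200)]

chain = metropolis(lambda t: log_posterior(t, data),
                   start=0.0, n_steps=5000, step_size=0.2, rng=rng)
burned = chain[1000:]  # discard the burn-in phase
posterior_mean = sum(burned) / len(burned)
sample_mean = sum(data) / len(data)
print(f"posterior mean: {posterior_mean:.3f}, sample mean: {sample_mean:.3f}")
```

For this toy problem the posterior mean should agree closely with the sample mean of the data. A real lens-model fit has many parameters and a far more expensive likelihood, which is what makes faster sampling schemes worthwhile.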

Search for clusters of information in data using tree methods

As a small subproject during my Bachelor thesis, I extended the functionality of a k-d tree-based detector that uses an overdensity criterion to find clustered information regions in data spaces. Aside from several smaller improvements, I introduced the possibility to filter the targets by additional parameters of choice, improved the handling of noise and systematic uncertainties, and added a routine that automatically creates graphical representations of the selected and detected datasets.
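The general technique can be sketched as follows. This is a simplified illustration under stated assumptions, not the detector mentioned above: the tree layout, the function names and the particular criterion (a neighbour count exceeding `threshold` times the mean count) are all hypothetical choices.

```python
import random


def build_kdtree(points, depth=0):
    """Recursively build a k-d tree as nested tuples (point, axis, left, right)."""
    if not points:
        return None
    axis = depth % len(points[0])
    points = sorted(points, key=lambda p: p[axis])
    mid = len(points) // 2
    return (points[mid], axis,
            build_kdtree(points[:mid], depth + 1),
            build_kdtree(points[mid + 1:], depth + 1))


def count_within(node, centre, radius):
    """Count tree points within `radius` of `centre`, pruning distant branches."""
    if node is None:
        return 0
    point, axis, left, right = node
    dist2 = sum((a - b) ** 2 for a, b in zip(point, centre))
    count = 1 if dist2 <= radius ** 2 else 0
    diff = centre[axis] - point[axis]
    # Only descend into a branch if the search sphere can overlap it.
    if diff <= radius:
        count += count_within(left, centre, radius)
    if diff >= -radius:
        count += count_within(right, centre, radius)
    return count


def overdense_points(points, radius, threshold):
    """Return points whose neighbour count exceeds `threshold` times the mean count."""
    tree = build_kdtree(points)
    counts = [count_within(tree, p, radius) for p in points]
    mean_count = sum(counts) / len(counts)
    return [p for p, c in zip(points, counts) if c > threshold * mean_count]


rng = random.Random(1)
# Synthetic data: a uniform background plus one compact clump.
background = [(rng.random(), rng.random()) for _ in range(300)]
clump = [(rng.gauss(0.7, 0.005), rng.gauss(0.3, 0.005)) for _ in range(60)]
flagged = overdense_points(background + clump, radius=0.05, threshold=2.0)
print(f"{len(flagged)} points flagged as overdense")
```

In this synthetic example the clump members are flagged, possibly together with a few background points that happen to lie next to the clump. A production detector would additionally need to account for noise and for edge effects near the boundary of the data space.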

Smaller internships or practicals:

- Modelling CMB data with CAMB (2017)

- GPU accelerated computing and its use in N-body simulations (2016)

- Practical on numerical methods (2015)